The first platform I looked at when I started streaming live was Instagram. From the start it was obvious that Instagram wants you to use a phone. The video needs to be upright, and there is no out-of-the-box solution for connecting streaming software running on a PC. There are some software packages that let you stream from your PC to Instagram, like YellowDuck, but they always make you jump through some hoops, such as authentication. I didn’t want to go there.

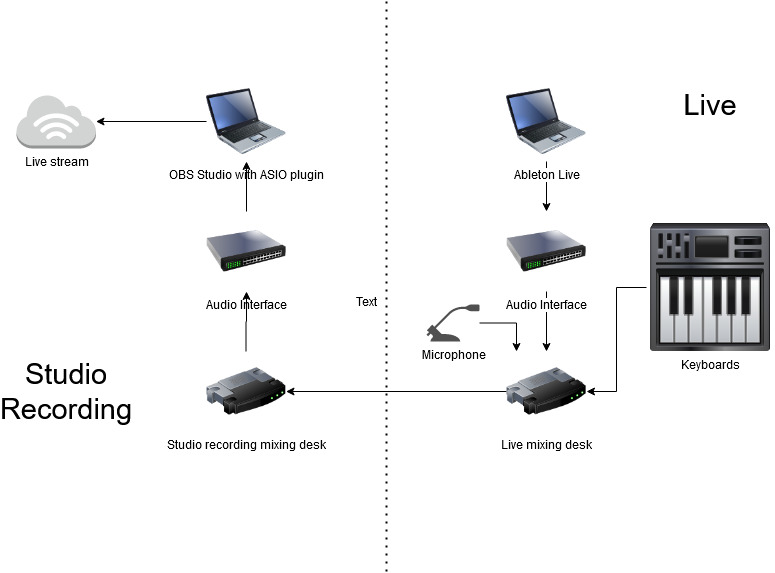

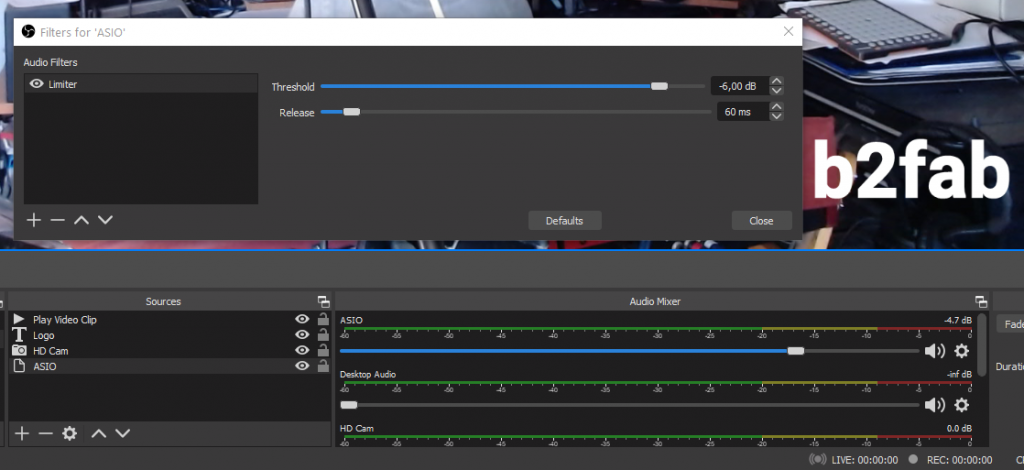

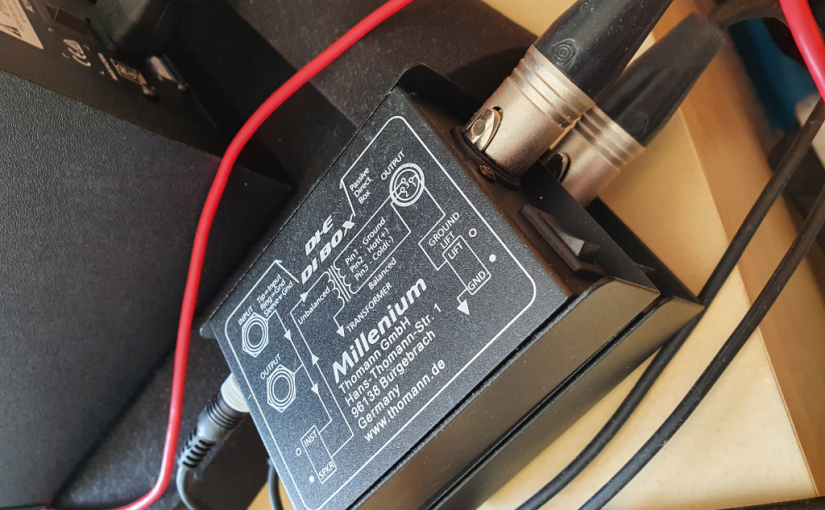

OK. Streaming from your phone seems to be the way to go for Instagram. In a previous post I explained that I want good live audio quality. When live streaming, my starting point is the output of the mixing desk that I would connect to the PA when playing live in real life, if you know what I mean. So I feed the output of the live mixing desk directly into the PC that streams to YouTube etc. The question now is how to feed this into a phone. Using the phone’s built-in microphone would feel very ‘live’, but I could only see it leading to a noisy, garbled show.

Fortunately, there are several ways to feed audio into your phone, much like feeding audio into a live-streaming PC. Isn’t it amazing how phones have become a kind of modern ultra-portable PC? The bad news is that this time your cheap budget phone probably won’t cut it. You need either an iPhone or an Android phone that is above mid-range.

For an iPhone you can find plenty of audio-to-Lightning cables. If you want a bit more control, you can use most of the iRig devices in the interface product section. Some of these have two inputs, so they can act as a simple live mixer for, say, a guitar and a microphone. For Android the situation is slightly more complex. You can check whether your phone supports audio input through its USB-C port, or whether it supports USB OTG (On-The-Go) on its USB port. If OTG is supported, again most of the iRig devices will work like a charm.

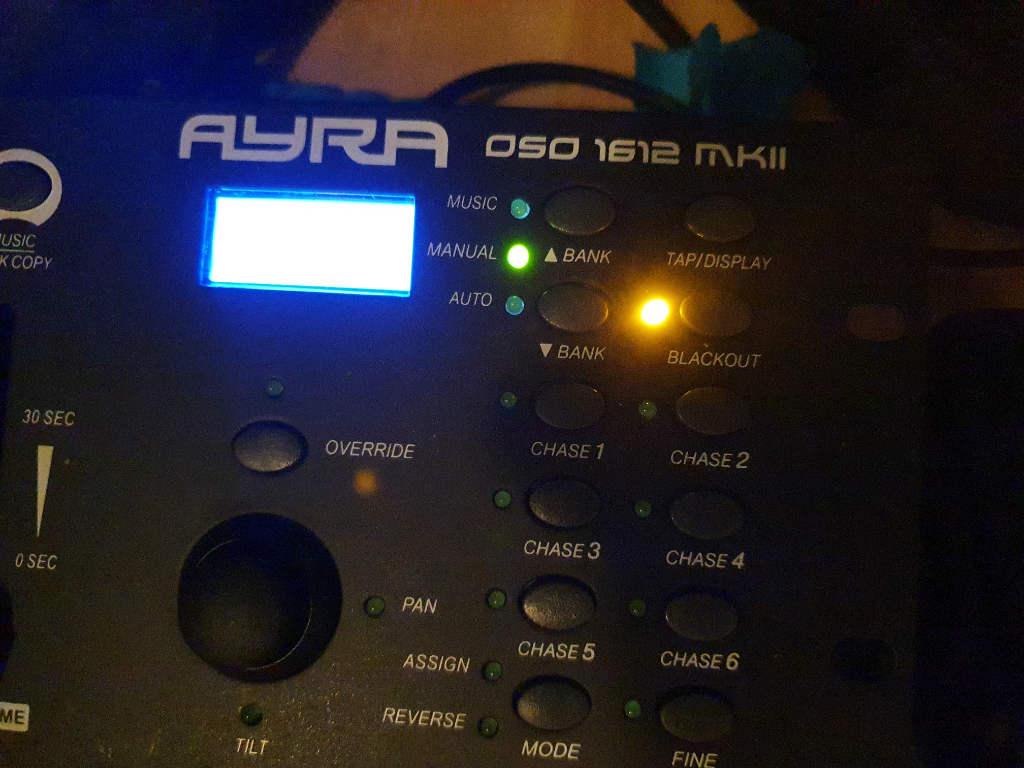

In my case the Samsung Galaxy S10 supports OTG, so the first thing I did was look up all the iRig devices to see which one was most suitable. Then I came across the Zoom U-22 and U-24 devices, which reminded me that my Zoom H1n is actually also an audio interface. I tested whether the Samsung Galaxy S10 recognized my Zoom H1n as an audio interface and boom! Instant success! No need to buy anything new. Then I got carried away: since my live mixer is also from Zoom, I tried connecting the live mixing desk itself as an audio interface. Unfortunately that didn’t work; the phone crashed.

So this was the setup for my first Instagram live stream. A special OTG cable connects the USB port of the Zoom H1n to the phone. The line input of the Zoom H1n is connected to the mix output of the Zoom L-12 LiveTrak mixer.

The first results were very promising. Unfortunately, there was a clearly audible hiss. I should fine-tune the levels in the signal chain between the live mixer and the audio input. It could also be that the Zoom H1n simply isn’t good enough as an audio interface. Another downside is that you have to rely on the Zoom H1n batteries and/or your phone battery, which is not ideal if you want to do a live-stream marathon. For my current purposes it’s OK. I hope you can now join the flood of Instagram live streamers too!